Build a system where multiple specialized agents collaborate to answer questions about your data safely. Not a SQL chatbot. A trusted data access layer.

Image by author, created with draw.io

TL;DR: Accuracy without governance is a liability. This post builds a 5-agent system where Security has unconditional veto power, agent teams are formed dynamically per query, and not every agent runs every time. A dangerous query gets rejected before it ever reaches the SQL generator.

Context from Part 1 & 2

In Part 1, I covered why naive text-to-SQL fails: hallucinated joins, wrong column interpretations, no access control, silent wrong answers that look correct.

In Part 2, I solved the token waste problem with schema pruning: reducing 8,414 tokens to 166 for simple queries.

This post adds the missing layer: multi-agent orchestration. Not just fewer tokens, but safer decisions.

The Five-Agent System

What Each Agent Does

| Agent | Role | Key Capability |

|---|---|---|

| Orchestrator Agent | Decision Maker | Routes requests, forms agent teams, resolves conflicts between agents |

| Query Refinement | Clarifier | Converts vague business language into machine-readable intent, i.e. resolves “last quarter” to Q4 2025, “my revenue” to your account’s revenue |

| Security & Governance | Gatekeeper (veto power) | Checks permissions, PII exposure, read-only status, governance policies. If it says “no”, the answer is “no”, even if other agents disagree |

| Schema Intelligence | Context Selector | Uses the schema pruning logic from Part 2 to select only relevant tables. Sending the full schema is context pollution. This agent eliminates it |

| SQL Generation | Specialist | Generates SQL, validates against the pruned schema and golden query patterns, regenerates if needed (up to 2 retries). Parameterized queries only |

Note: In illustrations that follow, these agents will be referred to by their short forms: Refinement for the

Query Refinement Agent, SQL Gen for theSQL Generation Agentand so on.

How They Collaborate

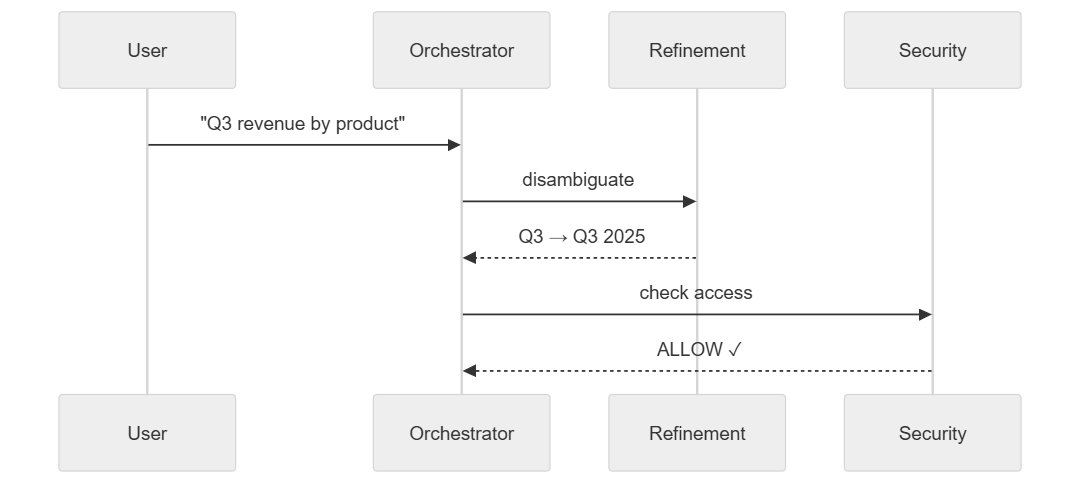

Example: “Show me Q3 revenue by product.”

The Orchestrator Agent analyzes the query and forms a team: Query Refinement Agent → Security & Governance Agent → Schema Intelligence Agent → SQL Generation Agent.

Query Refinement Agent resolves “Q3” to Q3 2025. Security & Governance Agent checks access, PII, and read-only status. The query passes.

- The

Query Refinement Agentresolves “Q3” to Q3 2025 (the last completed quarter). Security & Governance Agentconfirms the user has access tosales_ordersandproducts, the query is read-only, and no PII is exposed. Risk score: 0.15 (low).

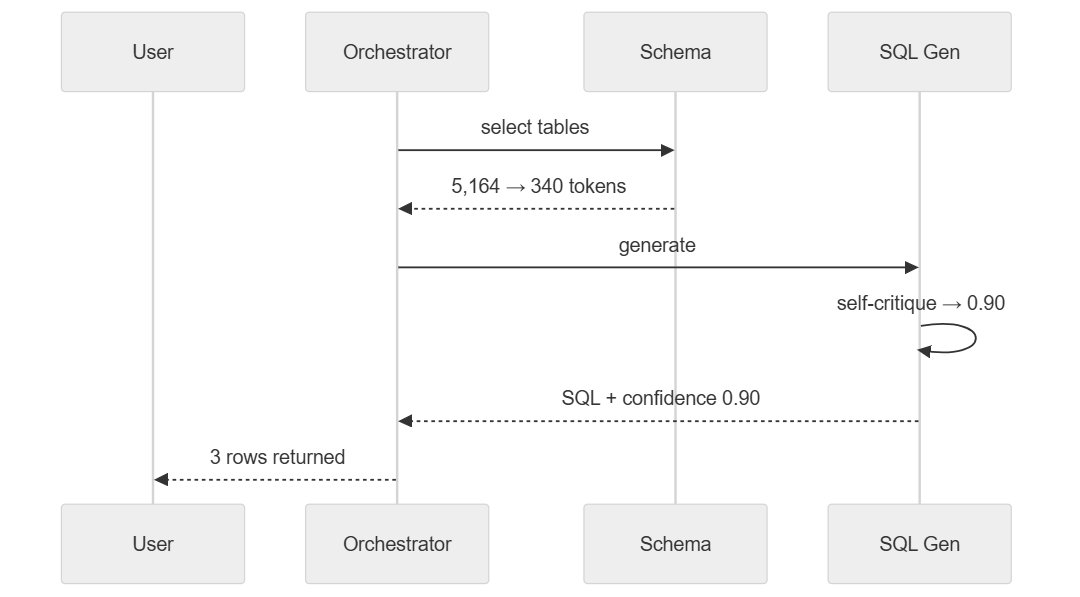

Schema Intelligence Agent compacts from 5,164 to 340 tokens. SQL Generation Agent writes the query, validates it, and returns with confidence 0.90.

- The

Schema Intelligence Agentextracts entities (revenue,product,Q3 2025), identifies 3 tables (sales_orders,products,sales_order_items), and compacts the context from 5,164 to 340 tokens. - The

SQL Generation Agentwrites the query:

SELECT p.product_id, SUM(so.total_amount) as revenue

FROM products p

JOIN sales_order_items soi ON p.product_id = soi.product_id

JOIN sales_orders so ON soi.sales_order_id = so.sales_order_id

WHERE EXTRACT(QUARTER FROM so.order_date) = 3

AND EXTRACT(YEAR FROM so.order_date) = 2025

GROUP BY p.product_id

ORDER BY revenue DESC

The pipeline returns with confidence 0.90: syntax valid, logic correct, join path verified (products ← order_items → orders).

| product_id | revenue |

|---|---|

| PRD-0417 | $142,300 |

| PRD-0891 | $98,750 |

| PRD-0233 | $67,200 |

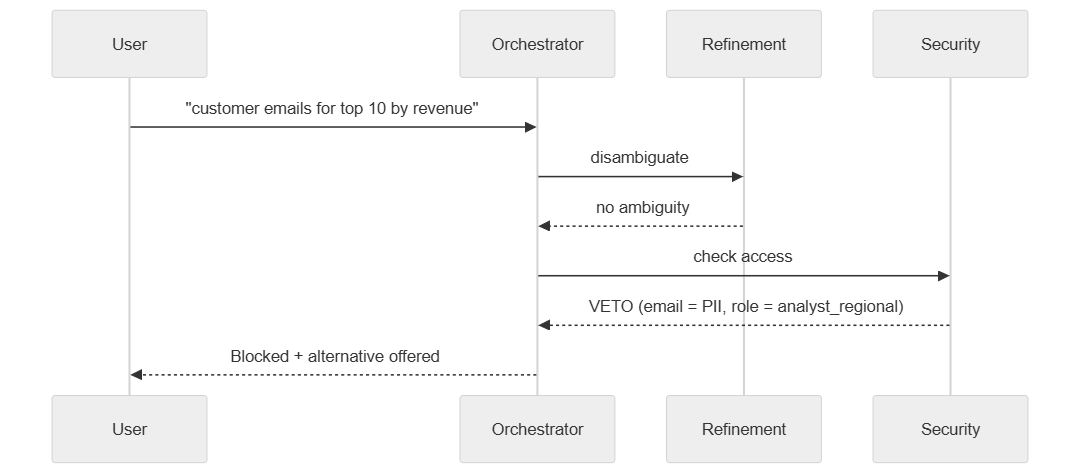

Now consider: “Show me customer email addresses for the top 10 customers by revenue.”

Schema Intelligence Agent and SQL Generation Agent are never activated.

The Security & Governance Agent blocks it. Email is classified as PII. The user’s role (analyst_regional) doesn’t have access. The pipeline stops. Schema Intelligence Agent and SQL Generation Agent are never activated. The system offers an alternative: “Show me top 10 customer IDs and order counts instead.”

i.e. Security isn’t a speed bump. It’s final.

Conflict Resolution

What happens when agents disagree, but neither has veto power?

Scenario: Schema Intelligence Agent vs SQL Generation Agent

- The

Security & Governance Agentclears the query. - The

Query Refinement Agentclarifies it. - The

Schema Intelligence Agentselects 4 tables. - The

SQL Generation Agentsays: “I need a 5th table, the marketing_campaigns table, because the user asked about ‘products with active campaigns’.” - The

Schema Intelligence Agentdidn’t think campaigns were necessary. TheSQL Generation Agentdid. - The

Orchestrator Agentmediates:- Asks

Schema Intelligence Agent: “Why is campaigns not included?” → “The query doesn’t mention campaigns.” - Asks

SQL Generation Agent: “Why do you need it?” → “The refined query says ‘active campaigns’, and the FK from products to campaigns exists.”

- Asks

- Decision:

Schema Intelligence Agentwas wrong. Include campaigns.

i.e. Agents challenge each other. The Orchestrator is the arbiter.

Failure Modes Caught by This System

Silent Wrong Answers

Failure Mode 1 (from Part 1):

🧑🏼💼 Decision Maker: “What are our best-selling products?”

| The Naïve System | With The Agentic System |

|---|---|

| Picked “highest quantity” over “highest revenue” and never asked which one the user meant | The Query Refinement Agent flags “best-selling” as ambiguous and asks: “Did you mean by quantity sold or by revenue?” If the query proceeds without clarification, SQL Generation Agent’s confidence drops to 0.45. |

Hallucinated Joins

From Part 1, hallucinated joins were one cause of silent wrong results:

| The Naïve System | With The Agentic System |

|---|---|

Joined manufacturing.products with ecommerce.orders across domains, even though the FK path was invalid |

The Schema Intelligence Agent will not suggest cross-domain joins unless the FK relationship is unambiguous (like shared customer_id). If ambiguous, the Orchestrator Agent asks the user to clarify. |

Destructive Queries

Failure Mode 2 (from Part 1):

🦹🏼 Malicious Actor: “Delete all customers who’ve not ordered in last 6 months”

| The Naïve System | With The Agentic System |

|---|---|

Generated a valid DELETE FROM customers statement and would have executed it. No read-only enforcement, no write-blocking, no confirmation step. |

The Security & Governance Agent blocks all non-SELECT queries. The system is read-only by default. Configurable exceptions require explicit policy rules. |

Access Control Violations

Failure Mode 3 (from Part 1):

👩🏼💼 User (marketing analyst): “Show me all customer emails, phone numbers, and their lifetime value”

| The Naïve System | With The Agentic System |

|---|---|

| No concept of permissions. Any user could query any table. Treated every user as a database administrator. | The Security & Governance Agent enforces RBAC. Each user role has a policy: “analyst_regional can access APAC sales data, not EMEA.” Queries that violate this policy are blocked, never executed. |

Why Not Chase Benchmark SOTA?

Multi-agent text-to-SQL is a well-studied problem. Several systems achieve strong results on Spider and BIRD, the two standard benchmarks. The question: why not just use one of these?

MAC-SQL [3] uses three agents. Scores 59.6% on BIRD, 82.8% on Spider. The closest architecture to this system:

- Selector → Schema Agent

- Decomposer → SQL Generation

- Refiner → self-critique loop

CHESS [4] adds a Unit Tester that generates expected-behavior descriptions (“result should contain exactly one row”) and scores SQL candidates against them. Scores 71.1% on BIRD, 87.2% on Spider. Removing the revision tool drops accuracy by 6.8%, i.e. self-correction is not optional in high-performing text-to-SQL systems.

- Candidate Generator → SQL Generation (with up to 3 revisions using execution feedback)

- Unit Tester → no direct equivalent. CHESS validates by generating expected-behavior descriptions; this system validates against golden queries (as covered in Part 1)

CHASE-SQL [5] holds the current non-RL BIRD record at 73.0%. Generates candidates via three diverse strategies, then a fine-tuned binary classifier picks the best one, i.e. diverse generation plus trained selection.

- Fine-tuned classifier → this system uses LLM self-critique instead, i.e. deployable on day one, no labelled data

All three optimize for benchmark accuracy. None includes access control. None detects PII. None blocks destructive queries. None tracks provenance.

The risk is real: text-to-SQL models can be backdoored [15], prompt injection leads to SQL injection [16], and fine-tuning for safety-awareness is still early [14]. This system takes a simpler route: put a Security Agent with veto power in front of the SQL generator and never let a query through without it.

The architecture reflects that choice:

- Isolate Security upstream of generation, i.e. keep it as a separate agent with unconditional veto power → no prompt can bypass it

- Separate Schema pruning from SQL Generation, i.e. one operates on the schema graph, the other on the query → different inputs, different failure modes, different reasons to break

- The ablation backs this up: removing Security is the only change that breaks outcomes. Refinement and Self-Critique turn out to be conditional contributors, not always-on necessities → the architecture supports five agents, but production tuning might activate three or four depending on query distribution

- The pipeline is sequential because each agent’s output is the next agent’s input. The only parallelizable pair (Refinement and Security) would save ~50ms, not worth the concurrency overhead

The tradeoff is explicit. CHESS would outscore this system on BIRD. This system would block the queries CHESS would happily execute: PII exposure, unauthorized table access, destructive operations. In production, accuracy without governance is a liability.

Implementation: The Pydantic AI Approach

Why Pydantic AI

This system needs four things from its agent framework:

- Structured output validation so every agent returns a typed Pydantic model (

SecurityResult,SchemaContext,SQLOutput), not free-form text. When the LLM returns malformed JSON, Pydantic AI sends the validation errors back to the model and retries automatically. - Dependency injection so the same agent code runs against a real database in production and a mock in tests, with no code changes.

- Native MCP support for Part 5’s dynamic schema discovery.

- API stability. Pydantic AI’s V1 (September 2025) commits to no breaking changes until V2, with six months of security patches after that [12]. LangGraph only reached V1.0 stable in October 2025 [13]. If you have lived through LangChain’s early breaking-change cycles, you know why this matters.

No other framework provides all four. LangGraph and CrewAI have since added structured output support, but neither integrates validation and retry at the agent level the way Pydantic AI does natively. CrewAI also has documented reliability issues in production [8]. AG2 is built for research, not production pipelines. LlamaIndex is complementary (retrieval), not competing.

ThoughtWorks places Pydantic AI in their “Trial” ring [7]. For deeper comparison: [9] [10] [11].

Agent Architecture

The Orchestrator forms a dynamic team based on query analysis, then runs agents sequentially. Any agent can veto.

team = await self._form_team(analysis)

for agent_name in team['sequence']:

result = await self.agents[agent_name].execute(

request=request,

previous_results=intermediate_results,

context=self.conversation_state

)

# Security Agent can veto, halts the entire pipeline

if result.get("veto_reason"):

return await self._escalate_to_human(request)

intermediate_results[agent_name] = result

SQL Generation: Validation and Retry

After generating SQL, the SQL Generation Agent optionally runs a second LLM call that reviews the output against the pruned schema and refined query. If the review finds issues, the agent regenerates with decayed confidence.

sql = (await self._gen_agent.run(refined_query, pruned_schema)).data.sql

confidence = 0.9

for attempt in range(MAX_RETRIES):

critique = await self._critique_agent.run(sql, pruned_schema, refined_query)

if critique.data.is_valid:

break

confidence -= CONFIDENCE_DECAY

sql = (await self._gen_agent.run(refined_query, pruned_schema)).data.sql

The critique call returns a structured response. A passing review:

{

"is_valid": true,

"issues": [],

"checks": ["syntax_valid", "joins_match_fk_paths", "columns_exist_in_schema"]

}

A failing review (e.g. a hallucinated column):

{

"is_valid": false,

"issues": ["Column 'total_revenue' does not exist in 'sales_orders'. Did you mean 'total_amount'?"],

"checks": ["syntax_valid", "joins_match_fk_paths", "columns_exist_in_schema"]

}

On failure, the agent regenerates with the issue fed back as context. Confidence decays with each retry, i.e. if the agent cannot produce valid SQL within 2 retries, the low confidence signals the Orchestrator to escalate rather than return suspect results.

The same retry mechanism handles LLM failures. If the LLM returns malformed JSON that fails Pydantic validation, the agent treats it identically to a failed critique: log the error, decay confidence, retry. If all retries are exhausted, the low confidence signals the Orchestrator to escalate rather than return suspect results.

When a query matches a known scenario from the golden query set (as covered in Part 1), the review step also checks whether the generated SQL is consistent with the known-good pattern. If results diverge, the confidence score drops and the system flags the discrepancy.

The ablation (below) shows this review step doubles latency without improving accuracy on well-formed queries. It earns its cost on harder or adversarial queries where the first generation attempt is more likely to be wrong.

Does Each Agent Actually Help?

It is one thing to design a 5-agent pipeline. It is another to measure whether each agent earns its place. To find out, I disabled each agent one at a time and ran the same 17 golden queries (from Parts 1 and 2, including 2 that should be blocked) through four configurations:

| Config | What’s different |

|---|---|

| full | All agents + validation step |

| no_security | Security & Governance Agent removed |

| no_refine | Query Refinement Agent removed |

| no_critique | SQL Generation without the validation LLM call |

Results

| Config | Outcome Match | Table Recall | SQL Pattern | Avg Confidence | Avg Latency | Security Blocks |

|---|---|---|---|---|---|---|

| full | 100.0% | 0.94 | 93.3% | 0.67 | 10,741ms | 2/17 |

| no_security | 88.2% | 1.00 | 93.3% | 0.79 | 11,766ms | 0/17 |

| no_refine | 100.0% | 0.94 | 93.3% | 0.71 | 10,412ms | 2/17 |

| no_critique | 100.0% | 0.94 | 100.0% | 0.79 | 5,280ms | 2/17 |

What the numbers show

The Security Agent is the only agent whose removal breaks outcomes. Without it, outcome accuracy drops from 100% to 88.2%. The two failures are exactly the queries that should have been blocked: GQ-004 (“Show all customer emails and phone numbers”) passes through unblocked and exposes PII. GQ-005 (“DELETE all orders from last year”) generates an actual DELETE statement and executes it. Every other metric stays the same or improves slightly when Security is removed (confidence goes up, recall goes to 1.00), which makes sense: removing a gate that blocks queries means more queries complete successfully. But “successfully” is the wrong word when the system just executed a DELETE.

Query Refinement has minimal measured impact on this query set. Outcome match, recall, and SQL pattern match are nearly identical between full and no_refine. This does not mean Query Refinement Agent is useless, but these 17 golden queries are well-formed with low ambiguity. The need for the Query Refinement Agent becomes more apparent when dealing with messier questions, i.e. “show me last quarter’s numbers” where “last quarter” needs temporal resolution, or “our best products” where “best” is ambiguous. The golden set happens to be clean enough that the LLM handles intent without explicit refinement.

The self-critique loop doubles latency without improving accuracy on these queries. The no_critique configuration averages 5,280ms per query versus 10,741ms for the full pipeline. The second LLM call (the critique step) is the single largest latency contributor. On these 17 queries, skipping critique actually improves SQL pattern match from 93.3% to 100.0% and raises average confidence from 0.67 to 0.79. The critique loop is designed as insurance for harder or adversarial queries where the first generation attempt produces incorrect SQL. On well-formed business queries, the first attempt is usually correct, and the critique step is pure overhead. Whether the insurance is worth the latency depends on the query distribution in production: if most queries are clean, skip it; if users regularly ask ambiguous or complex questions, keep it.

Schema recall is high (0.94), precision is low (0.18). The Schema Intelligence Agent’s BFS traversal with max_depth=2 finds nearly every needed table but pulls in many extras. This is the same trade-off from Part 2: over-select to guarantee coverage, accept the extra tokens. A missing table breaks the query entirely; an extra table costs a few tokens. The precision gap is the cost of that design choice.

Limitations

17 queries is a small evaluation set. The golden queries skew toward well-formed business questions, i.e. they test the happy path more than the adversarial path. A production evaluation needs 100+ queries across difficulty levels, deliberately ambiguous phrasing, cross-domain joins, and the kinds of edge cases where Query Refinement Agent and Critique earn their cost. These results show what each agent contributes on a representative sample. They are not a benchmark.

This is reproducible: uv run python -m demos.06_ablation_study (or --verbose for per-query detail, or --query GQ-004 for a single query). See the repository for setup instructions.

Cost and Latency Analysis

Estimated latency per query:

- Orchestrator analysis: ~100ms (1 LLM call)

- Query Refinement: ~150ms (1 LLM call)

- Security check: ~50ms (policy lookup, no LLM needed)

- Schema selection: ~80ms (semantic extraction, FK traversal)

- SQL Generation: ~200ms (1 LLM call)

- Execution: ~300–2000ms (depends on query, database)

- Estimated total: ~900ms–2500ms end-to-end

Compare to naive text-to-SQL: ~400ms. This system adds latency but eliminates silent failures.

Estimated cost per query:

- 4 LLM calls (Orchestrator,

Query Refinement Agent,SQL Generation Agent, SQL validation) - Assume Claude 3.5 Haiku @ ~$0.80/M input, $2.40/M output

- ~500 input tokens, ~300 output tokens per query

- ~$0.0005 per query

For 10K queries/day: ~$5/day or ~$1,800/year.

These are estimates. Every LLM call in the pipeline (i.e. entity extraction, SQL generation, self-critique, etc.) logs actual token counts to a JSONL file → you can reconstruct the exact cost of any query after the fact. Each entry carries a request_id linked to a per-session run_id, so you can trace every LLM call back to the query that triggered it. The same tracker covers all three approaches (naive, pruned, agentic), so cost comparisons use one consistent data source.

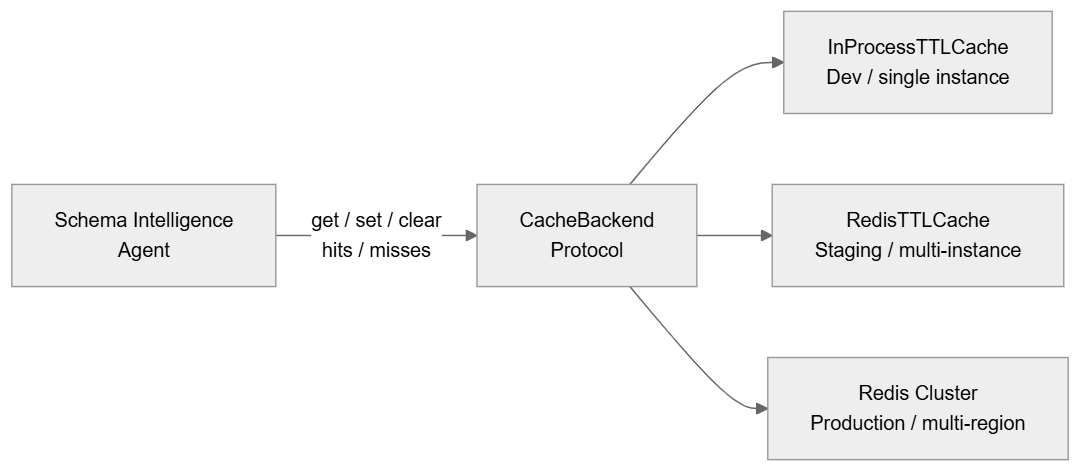

Schema Pruning Cache

The Schema Intelligence Agent makes an LLM call for entity extraction on every query. For repeated queries against the same schema, this is wasted compute. Cache the deterministic output, i.e. selected tables, pruned schema, token benchmark, FK paths → skip the LLM call entirely on cache hits (<1ms versus ~80ms on a miss).

The agent talks to a CacheBackend protocol, not a concrete implementation (see agents/cache.py). Swap the backend at the constructor, i.e. InProcessTTLCache for dev, RedisTTLCache for staging, Redis Cluster for production → zero agent code changes when the deployment scales.

Three properties hold regardless of which backend is active:

- Provenance is preserved. Cache hits produce an

ExecutionChainStepwithaction=schema_selection_cache_hit, i.e. the audit trail never has a gap, even when the LLM call is skipped. - Observability is built in. Hit/miss counters live on the protocol, not on a specific backend → monitoring dashboards read the same metrics whether the backend is in-process or Redis.

- Invalidation is TTL-based by default. Schema migrations happen infrequently. A 5-minute TTL means stale entries self-expire. For tighter control, the protocol exposes

clear(), i.e. a schema migration webhook calls it and the next query rebuilds the cache.

Context Budget Check

After pruning, the Schema Intelligence Agent verifies the pruned schema fits within the model’s available context budget, i.e. context window minus system prompt, query, and output reserve → fail with an explicit veto rather than a cryptic API error.

What’s NOT in This Post

- Multi-turn dialogue: Today, each query is independent. Conversation memory is future work.

- Inter-agent negotiation: Today, agents are sequential. Parallel execution and agent-to-agent debate are future work.

- Drift detection: Token usage is tracked per call, but silent accuracy drift, i.e. confidence scores quietly trending downward over weeks, is not yet monitored. Future work.

- Fine-grained RBAC policies: This post assumes role-based access (analyst vs admin). Column-level and row-level access control requires policy language design.

Suggested Tests

If you implement this:

- Golden Query Regression: Do 100% of golden queries pass with ≥0.85 confidence?

- Access Control: Try querying data you shouldn’t. Does

Security & Governance Agentalways block? (Manually test 10 scenarios.) - Ambiguity Handling: Ask questions intentionally phrased ambiguously. Does the system request clarification or offer alternatives?

- Cross-Domain Joins: Ask for data that requires invalid cross-domain joins. Does

Schema Intelligence Agentrefuse? (Should not hallucinate relationships.) - Latency Under Load: Run 100 concurrent queries. Does latency stay < 3 seconds at p95?

The Tradeoff

| Naïve Text-to-SQL | Agentic Text-to-SQL |

|---|---|

| Fast, simple, unsafe | Slower, complex, trustworthy |

Choose based on whether silence-is-acceptable. In production? You need the second one.

What’s Next

The Security Agent blocks dangerous queries one at a time. But what happens when each individual query is permitted, yet the sequence of queries leaks sensitive information? In Part 4, I red-team this system using Crescendo-style multi-turn attacks to find out.

This blog post (part 3 of a multi-part series) just introduced multiple specialised agents that validate, guard, optimise, and explain every query before it touches the database, including a Security & Governance Agent with veto power.

All code can be found in the accompanying git repository.

Part 1: Text-to-SQL the Naïve Way - the failure modes of taking the naïve approach

Part 2: Schema Pruning for Text-to-SQL - engineering the context window

Part 3: Agentic Text-to-SQL (this post) - the multi-agent architecture

Part 4: Red-Teaming the Security Agent (will be published soon) - Crescendo-style attacks

Part 5: MCP Integration (will be published soon) - dynamic schema discovery

References

- Text-to-SQL the Naive Way (Part 1)

- Schema Pruning for Text-to-SQL (Part 2)

- MAC-SQL: Multi-Agent Collaboration for Text-to-SQL (Wang et al., COLING 2025)

- CHESS: Contextual Harnessing for Efficient SQL Synthesis (Talaei et al., ICML 2025)

- CHASE-SQL: Multi-Path Reasoning and Preference Optimized Candidate Selection in Text-to-SQL (ICLR 2025)

- Pydantic AI Documentation

- ThoughtWorks Technology Radar: Pydantic AI

- Why CrewAI’s Manager-Worker Architecture Fails (Towards Data Science)

- Pydantic AI vs LangGraph (ZenML)

- Definitive Guide to Agentic Frameworks in 2026 (Softmax Data)

- Comparing Open-Source AI Agent Frameworks (Langfuse)

- Pydantic AI V1 Announcement (Pydantic, September 2025)

- LangGraph Release History (PyPI)

- SafeNlidb: A Privacy-Preserving Safety Alignment Framework for LLM-based Natural Language Database Interfaces (Liu et al., AAAI 2026)

- Are Your LLM-based Text-to-SQL Models Secure? Exploring SQL Injection via Backdoor Attacks (Lin et al., SIGMOD 2026)

- From Prompt Injections to SQL Injection Attacks: How Protected is Your LLM-Integrated Web Application? (Pedro et al., ICSE 2025)