In Part 1, the naïve Text-to-SQL approach sent 8,414 tokens of schema context to generate 16 tokens of SQL - a 526:1 input-to-output ratio. This post engineers the fix: a deterministic schema pruner - context engineering at the schema layer - that selects only the tables relevant to each query, with no LLM dependency.

TL;DR: A deterministic schema pruner, using FK graph traversal and 3-layer entity resolution, reduces context by 93% on average across 15 benchmark queries against a 35-table schema. Mean recall is 1.00 (every needed table is selected), mean precision is 0.93 (minimal extra tables). No LLM calls. Fully reproducible. The implementation is ~500 lines of Python with one external dependency (

tiktoken).

The Problem: 526:1

Part 1 established the token waste problem with a concrete measurement. For the simplest possible query - “Show all active products in the Electronics category” - the naïve approach sends the full 35-table DDL as context:

| Context strategy | Input tokens | vs. Answer |

|---|---|---|

| Full schema DDL (naïve approach) | 8,414 | 526x |

| CREATE TABLE blocks only | 5,230 | 327x |

| Only the products table | 166 | 10x |

Note on token counts: All benchmarks in this post use 5,164 tokens (CREATE TABLE blocks only from the committed schema, measured with

tiktoken) as the baseline. Part 1 reported 8,414 for full DDL including comments, DROP statements, and operational commands; stripping those to CREATE TABLE blocks only is itself a 38% reduction - a point from Part 1 that the benchmarks below build on.

The query only needs one table. The naïve approach sends all 35. That is not just expensive - at enterprise scale (200-500+ tables), the schema exceeds most models’ context windows entirely and the approach becomes technically impossible.

But the gap between 5,230 tokens (every CREATE TABLE block) and 166 tokens (just the products table) suggests that if we can identify which tables a query needs, we can cut context by 95%+ per query.

The question is: how do you identify the right tables from a natural language query, without using an LLM?

The Idea: FK Graph Pruning

The schema is not a flat list of tables. It is a graph. Foreign key relationships define edges between tables, and those edges encode which tables are structurally related.

Given a query like “Show total revenue by product category”, the approach is:

- Resolve the query to seed tables - tables directly referenced by the natural language

- Traverse the FK graph from those seeds to find structurally connected tables

- Prune the schema to only the selected tables

For the revenue query:

- “revenue” resolves to

ordersandorder_items(seed tables) - “product” resolves to

products(seed table) - All three are FK-connected (

order_items→orders,order_items→products), so no additional traversal needed - Result: 3 tables instead of 35 - a 91% token reduction

The critical insight is that entity resolution can be deterministic. Business terminology maps predictably to database tables: “revenue” means orders, “shipping” means shipments, “quality” means quality inspections. These mappings don’t change between queries. They can be compiled once and applied without an LLM call.

Other approaches to table selection exist: [1] embedding-based retrieval - encode table descriptions as vectors, retrieve nearest matches (RAG for schema selection), [2] LLM-powered extraction - ask a model to identify relevant tables directly. Both work, but both add latency, cost, and infrastructure dependencies. Embedding-based retrieval adds ~50-200ms per query for vector lookup plus requires maintaining an embedding index; LLM extraction adds ~500-2000ms plus API cost. The deterministic pruner runs in <1ms.

Deterministic keyword resolution is faster, fully reproducible, and free. For the majority of queries where business terms map predictably to tables, it is the better trade-off.

Building the Pruner

The implementation has two core components: a 3-layer entity resolver that identifies seed tables from natural language, and a BFS traversal that expands seeds through the FK graph.

3-Layer Entity Resolution

The resolver processes the query through three layers, each catching what the previous one misses:

def resolve_tables(self, query: str) -> Set[str]:

seeds: Set[str] = set()

query_lower = query.lower()

query_words = set(re.findall(r"\w+", query_lower))

resolved_words: Set[str] = set()

# Layer 1: Direct table name matching

for table in self._all_tables:

singular = _singularize(name=table)

if table in query_lower:

seeds.add(table)

resolved_words.add(table)

elif singular in query_lower:

seeds.add(table)

resolved_words.add(singular)

# Layer 2: Business entity mapping

for term, tables in ENTITY_MAP.items():

if term in query_words:

seeds.update(tables)

resolved_words.add(term)

# Layer 3: Column name matching

for col_name, tables in self._column_index.items():

if len(col_name) < 6:

continue

if col_name in resolved_words:

continue

if col_name in query_lower:

seeds.update(tables)

if not seeds:

seeds = {"orders", "products", "customers"}

return seeds

Layer 1 catches direct references: “Show all orders” → orders. It handles both plural table names and their singular forms (“each product” → products).

Layer 2 maps business terminology to tables. The ENTITY_MAP has ~30 entries mapping terms like “revenue” to {orders, order_items}, “shipping” to {shipments}, “profit” to {profitability_analysis}, and so on. This is where domain knowledge lives. A marketing analyst asking about “campaign conversion rates” doesn’t know (or care) that the data lives in campaigns and conversion_funnels. The entity map bridges that gap.

Layer 3 catches column-name references that layers 1 and 2 missed. If the query mentions unit_cost, and unit_cost is a column in raw_materials, that table gets added as a seed. A stop-list excludes generic column names (id, status, name, created_at) that would match too broadly.

One subtlety: Layer 3 skips words already resolved by layers 1-2. Without this, “revenue” would match both the entity map (Layer 2 → orders, order_items) and a column name in profitability_analysis (Layer 3), pulling in an unrelated table. The resolved_words set prevents this double-counting.

FK Graph Traversal

Once seed tables are identified, find_minimal_tables runs BFS through the FK graph, bounded by a max_depth parameter that controls how many FK hops to traverse. Higher depth finds more structurally related tables but risks pulling in too many. The benchmarks below show that depth=0 (seeds only, no traversal) achieves 100% recall on all test queries - the 3-layer resolver is strong enough that BFS expansion is rarely needed.

This is a useful result: it means the pruner’s accuracy comes from entity resolution quality, not graph traversal depth. For well-formed business queries, the right tables are identified directly from the natural language. BFS is insurance for edge cases where the query doesn’t explicitly mention a needed join table - for instance, if a query mentions “Singapore products” but not “manufacturing” or “production,” the resolver finds products directly but needs production_runs (FK-connected to products) for the Singapore filter. BFS at depth=1 would catch it.

Parsing the FK Graph

The pruner builds its FK graph by parsing DDL at initialization. It handles both inline FOREIGN KEY constraints and ALTER TABLE ADD FOREIGN KEY statements:

# Parse inline FOREIGN KEY per CREATE TABLE block

fk_in_block = re.compile(

r"FOREIGN\s+KEY\s*\((\w+)\)\s*REFERENCES\s+(\w+)\s*\((\w+)\)",

re.IGNORECASE,

)

for table_name, ddl_block in self._table_ddl.items():

for match in fk_in_block.finditer(ddl_block):

self._add_fk_edge(table_name, match.group(1), match.group(2), match.group(3))

Each FK creates a bidirectional edge. If orders.customer_id references customers.customer_id, both orders → customers and customers → orders are added. This ensures BFS can traverse in either direction.

For the 35-table schema, this produces 59 FK edges - substantial connectivity that BFS can exploit when needed.

Benchmarks

I benchmarked the pruner against 15 golden queries spanning simple single-table lookups, multi-table joins, cross-domain analytics, and complex inventory queries. Each query runs through resolve_tables → find_minimal_tables (depth=0) → prune_schema, measuring token reduction, precision, and recall against expected tables. Precision measures whether all selected tables are needed; recall measures whether all needed tables are selected.

| ID | Query | Tokens | Reduction | P | R |

|---|---|---|---|---|---|

| GQ-001 | How many orders were placed last month? | 5,164 → 157 | 97.0% | 1.00 | 1.00 |

| GQ-002 | Show total revenue by product category | 5,164 → 465 | 91.0% | 1.00 | 1.00 |

| GQ-003 | List all products with their current inventory levels | 5,164 → 239 | 95.4% | 1.00 | 1.00 |

| GQ-006 | Show me monthly sales trends for the last 6 months | 5,164 → 365 | 92.9% | 1.00 | 1.00 |

| GQ-007 | Which employees have the highest number of quality inspections? | 5,164 → 218 | 95.8% | 1.00 | 1.00 |

| GQ-008 | Show me the best products | 5,164 → 100 | 98.1% | 1.00 | 1.00 |

| GQ-009 | What is the average order value by customer segment? | 5,164 → 471 | 90.9% | 0.67 | 1.00 |

| GQ-010 | Show production run details for product P001 | 5,164 → 313 | 93.9% | 1.00 | 1.00 |

| GQ-011 | Show shipment status and delivery partner details | 5,164 → 307 | 94.1% | 1.00 | 1.00 |

| GQ-012 | What is the total cost allocation per department? | 5,164 → 453 | 91.2% | 0.50 | 1.00 |

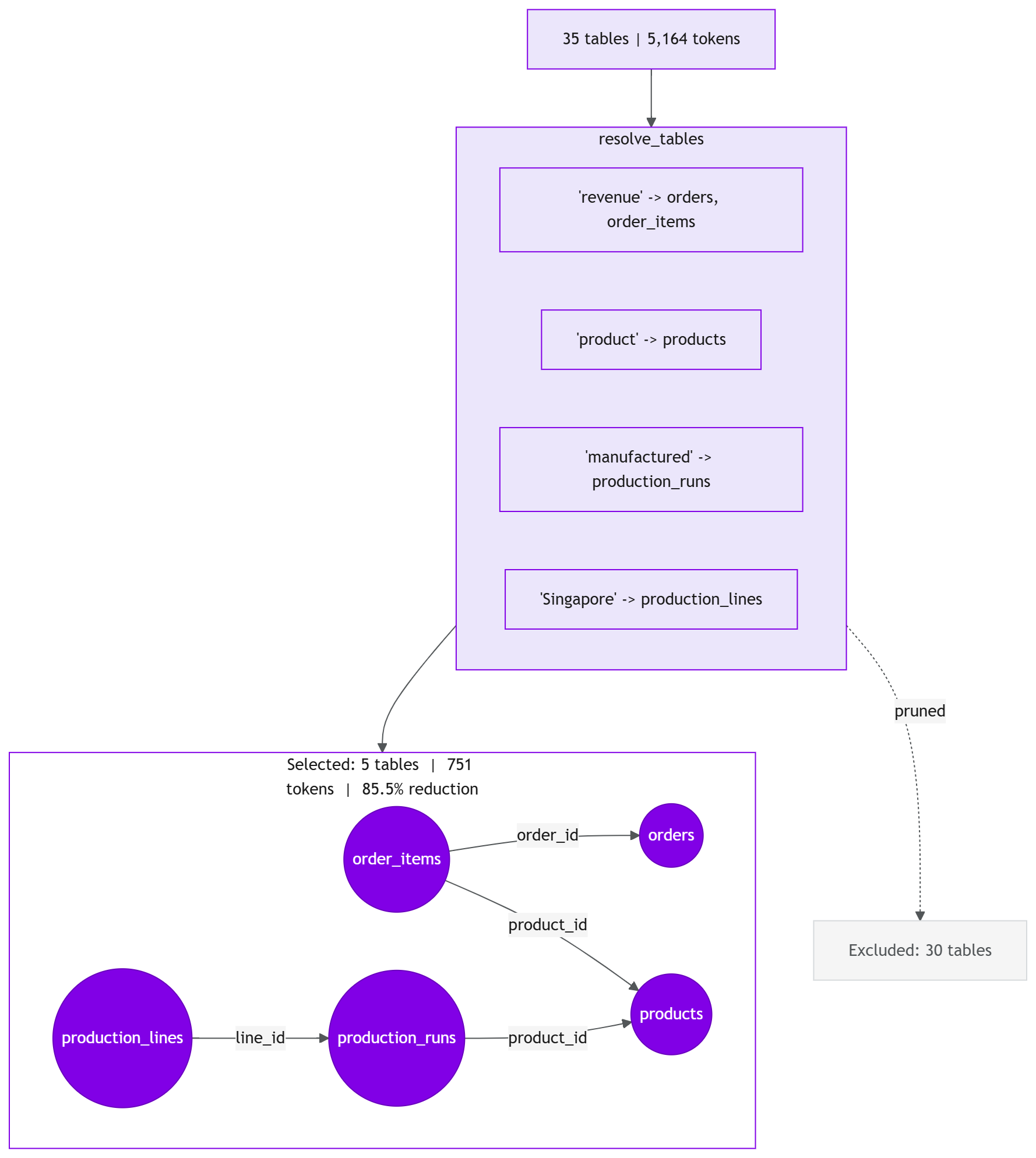

| GQ-013 | Show revenue by product for products manufactured in Singapore | 5,164 → 751 | 85.5% | 1.00 | 1.00 |

| GQ-014 | What is the conversion rate for each marketing campaign? | 5,164 → 319 | 93.8% | 1.00 | 1.00 |

| GQ-015 | Which warehouses have products below their safety stock reorder point? | 5,164 → 658 | 87.3% | 0.80 | 1.00 |

| GQ-016 | Show supplier reliability scores and their raw material lead times | 5,164 → 189 | 96.3% | 1.00 | 1.00 |

| GQ-017 | Show me monthly profit margins by product category | 5,164 → 389 | 92.5% | 1.00 | 1.00 |

Summary

| Metric | Value |

|---|---|

| Mean token reduction | 93.0% |

| Median token reduction | 93.8% |

| Mean precision | 0.93 |

| Mean recall | 1.00 |

| Total tokens across 15 queries | 77,460 → 5,394 (72,066 saved) |

| Queries failing recall threshold (< 0.8) | 0 |

Recall is 1.00 across all 15 queries - every table needed for every query was selected. This is the metric that matters most: a missing table means the LLM cannot generate correct SQL.

Precision is 0.93 - three queries selected extra tables beyond what was strictly needed (GQ-009, GQ-012, GQ-015). This is the right trade-off. Including an extra table costs a few tokens; missing a needed table breaks the query entirely.

Ablation: What Each Layer Contributes

How much does each resolver layer contribute? To answer this, I ran all 15 queries through three configurations - Layer 1 only, Layers 1+2, and all three layers:

| Config | Mean Precision | Mean Recall | Queries with R < 1.0 |

|---|---|---|---|

| L1 (table names only) | 0.93 | 0.57 | 12/15 |

| L1+L2 (+ entity map) | 0.99 | 1.00 | 0/15 |

| L1+L2+L3 (+ column matching) | 0.93 | 1.00 | 0/15 |

Layer 1 alone fails 12 of 15 queries - direct table name matching cannot resolve business terms like “revenue,” “quality,” or “profit.” Layer 2 (the ENTITY_MAP) is the critical component: it lifts recall from 0.57 to 1.00 and eliminates all failures. Layer 3 (column name matching) adds no recall on these 15 queries but costs 6 points of precision from extra table matches. Layer 3 remains valuable as insurance for queries referencing column names not covered by the entity map, but the ablation confirms that Layer 2 carries the resolver.

This is reproducible: python demos/05_schema_pruning_ablation_study.py (or --verbose for per-query detail).

A closer look at GQ-012. The query “What is the total cost allocation per department?” needs cost_allocations and departments. The pruner selected those two plus production_lines and warehouses (precision: 0.50). The cause: Layer 3’s substring matching found “location” inside “allocation”, pulling in every table with a location column. The cost is 453 tokens instead of ~280 - still a 91% reduction, but it illustrates the precision ceiling of substring matching. GQ-009 has a similar pattern: “segment” (a column in customer_lifetime_value) matched via Layer 3, adding one extra table. Both cases confirm the design choice: substring matching catches real references that exact-match would miss, at the cost of occasional false positives.

The hardest query is GQ-013: “Show revenue by product for products manufactured in Singapore”. It spans 5 tables across 3 domains (E-commerce, Manufacturing, Analytics). The pruner resolves all 5 correctly - orders, order_items, products, production_runs, production_lines - still achieving an 85.5% reduction.

End-to-End Validation

The benchmarks above validate the pruner in isolation — does it select the right tables? A separate validation script tests the full pipeline:

- For each golden query, generate SQL with the full schema and again with the pruned schema

- Execute both against the database and compare outcomes

- If pruning breaks SQL generation, this is where it shows up

- Call order is randomised per query to avoid first-call latency bias (TCP/TLS handshake penalty)

The 526:1 Problem, Solved

Recall the motivating example from Part 1: “Show all active products in the Electronics category” needed 16 tokens of SQL but received 8,414 tokens of schema context.

With pruning, that same query receives 100 tokens of context (just the products table’s CREATE TABLE block). The ratio drops from 526:1 to 6:1.

Sending all 35 tables when the LLM needs one is context pollution - irrelevant schema diluting the signal for correct SQL generation. The pruner implements context engineering at the schema layer: finding the minimal effective context for each query, rather than sending everything on every call. The 526:1-to-6:1 improvement is a direct measure of its effect.

The token reduction maps directly to cost. Schema context alone, at 10,000 queries per day:

| Model | Price (per 1M input) | Before pruning | After pruning | Annual saving |

|---|---|---|---|---|

gpt-4o-mini |

$0.15 | $7.75/day | $0.54/day | $2,632 |

gpt-4o |

$2.50 | $129/day | $9.00/day | $43,800 |

These figures represent savings on schema context alone; total per-query cost includes system prompt and response tokens, which are unaffected by pruning. The savings scale linearly with query volume. At 100K queries per day on gpt-4o, pruning saves over $430K per year - on a 35-table schema.

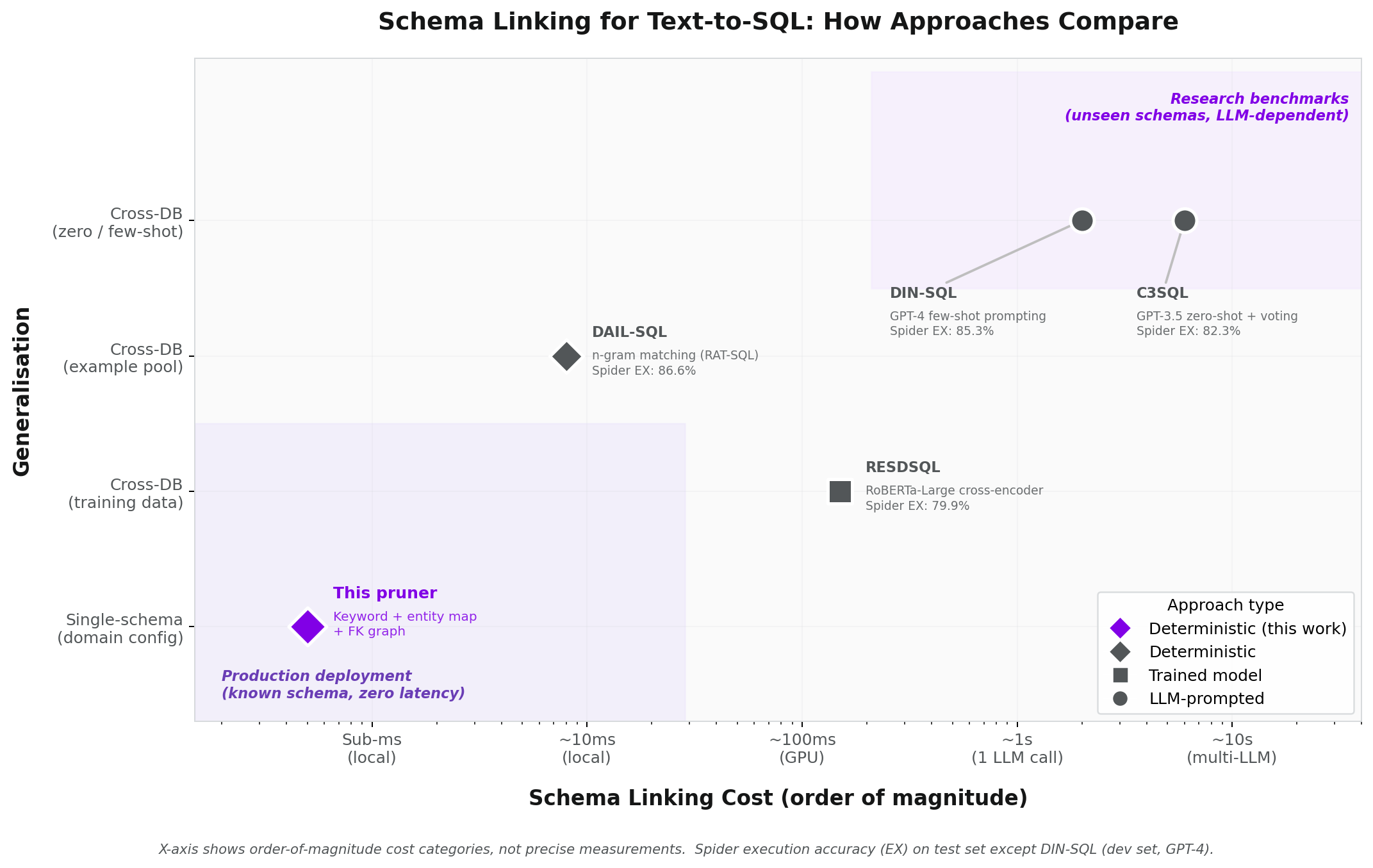

Related Work: Schema Linking

The task this pruner performs, identifying which tables a natural language query needs, has a name in the research literature: schema linking. It is a well-studied subproblem of text-to-SQL. Several recent systems tackle it, and the approaches fall into three categories: prompt an LLM, train a dedicated model, or match deterministically.

Most published approaches use an LLM for schema linking. DIN-SQL prompts GPT-4 with few-shot examples to classify which tables and columns are relevant, then chains three more LLM calls for query classification, SQL generation, and self-correction. C3SQL goes further: it prompts GPT-3.5 ten times for table recall, takes a majority vote, then generates 20 candidate SQL queries and picks the most common result. Both achieve strong accuracy on Spider (DIN-SQL: 85.3%, C3SQL: 82.3%), but both pay for it in latency and API cost. DIN-SQL needs four sequential LLM calls per query. C3SQL needs roughly forty.

RESDSQL takes a different route: train a dedicated RoBERTa-Large cross-encoder to score table relevance, then feed only the top-ranked tables to a T5-3B model for SQL generation. The decoupling principle, letting a specialised component handle schema selection rather than burdening the SQL generator with the full schema, is the same principle behind this pruner. The difference is in the mechanism. RESDSQL trains a neural classifier on 7,000 labelled examples and needs a GPU at inference time. This pruner uses deterministic rules with a hand-curated entity map and runs on anything.

The most interesting comparison is DAIL-SQL, because its schema linking is also deterministic. It uses n-gram matching adapted from RAT-SQL, checking whether question words overlap with table and column names. This is conceptually close to Layer 1 of this pruner. DAIL-SQL pairs this deterministic linker with carefully selected few-shot examples and GPT-4, achieving 86.6% on Spider, the highest of the four. That result matters: the top-performing system on Spider uses deterministic schema linking. Pattern matching works for table selection, even at the top of the leaderboard.

Where this pruner goes further than DAIL-SQL’s n-gram matcher is Layer 2. The entity map resolves business terminology that no surface-level matcher can catch: “revenue” to orders and order_items, “quality” to quality_inspections, “profit” to profitability_analysis. The ablation confirms this is critical. Layer 1 alone (the equivalent of n-gram matching) achieves 0.57 recall on the benchmark queries. Layer 2 lifts it to 1.00. The cost is a hand-curated mapping, roughly 30 entries for 35 tables, that must be maintained per schema.

This pruner has not been evaluated on Spider. Spider measures cross-database generalisation: the ability to handle schemas never seen during development. This pruner is designed for single-schema production deployment, where the entity map is curated once for a known business domain and amortised across all queries. Tables may be added over time, but the domain is stable. For this application, cross-database generalisation is not a requirement. The schema is known, the entity map is maintainable, and the trade-off is zero-latency schema linking with no model dependency.

DIN-SQL: Pourreza & Rafiei, NeurIPS 2023. RESDSQL: Li et al., AAAI 2023. C3SQL: Dong et al., 2023. DAIL-SQL: Gao et al., VLDB 2024.

Limitations

This is a deterministic system. It has predictable failure modes:

Novel terminology. If a user asks about “throughput” meaning production output, the entity map won’t match it. The entity map has ~30 entries covering common business terms, but it cannot anticipate every synonym.

Implicit table references. A query like “Which managers approved the most expenses?” requires understanding that “managers” are employees with a certain role, and “expenses” map to cost allocations. Neither word appears in the entity map or column names.

Schema evolution. When new tables are added or renamed, the entity map needs manual updates. The column index rebuilds automatically from DDL, but business term mappings do not. At enterprise scale, the entity map grows sublinearly: many tables share business terminology (e.g., “revenue” maps to the same tables regardless of how many other tables exist), so a 500-table schema does not require 500 mappings - but the maintenance burden is real.

These limitations are exactly why Part 3 introduces LLM-powered entity extraction on top of deterministic pruning. The deterministic layer handles the 80-90% of queries where business terms map predictably to tables. The LLM layer handles the rest - synonyms, implicit references, and novel terminology - while the deterministic layer provides a fast, reproducible baseline that the LLM can fall back to if extraction fails.

Try It

The full implementation, tests, and benchmark script are in the companion repository.

git clone https://github.com/nirmalyaghosh/text-to-sql.git

cd text-to-sql

uv pip install -e .

# Run the benchmark

python demos/05_schema_pruning_benchmark.py

# Verbose mode (shows per-query table details)

python demos/05_schema_pruning_benchmark.py --verbose

# Single query

python demos/05_schema_pruning_benchmark.py --query GQ-013

# Run the tests

pytest tests/test_schema_pruner.py -v

No database connection needed. No API keys. No LLM calls. The pruner works entirely from DDL text.

What’s Next

The pruner demonstrated here is a standalone module - no agent framework, no LLM dependency, no infrastructure requirements. It is the foundation that the agentic system builds on.

In Part 3, I introduce a 5-agent architecture where the Schema Intelligence Agent uses this pruner as its first pass, then falls back to LLM-powered entity extraction for queries that deterministic matching cannot resolve.

Part 1: Text-to-SQL the Naïve Way - the failure modes

Part 2: Schema Pruning for Text-to-SQL (this post) - engineering the context window

Part 3: Agentic Text-to-SQL - the multi-agent architecture

Part 4: Red-Teaming the Security Agent (will be published soon) - Crescendo-style attacks

Part 5: MCP Integration (will be published soon) - dynamic schema discovery

Related: Schema Linking for Text-to-SQL (will be published soon) - extended benchmarks on Spider

ℹ️ Version History

- 26 Feb 2026 : Post published

- 28 Feb 2026 : Added Related Work section

- 02 Mar 2026 : Added End-to-End Validation subsection

- 08 Mar 2026 : Added link to Part 3